BELLEVUE, Wash.–(BUSINESS WIRE)–Buurst, a leading enterprise-class data performance company, today announced the availability of its flagship product, SoftNAS, in the Microsoft Azure Marketplace, an online store providing applications and services for use on Azure. Buurst customers can now take advantage of the productive and trusted Azure cloud platform, with streamlined deployment and management.

Three Technology Trends Helping to Revive the Oil and Gas Industry

My career has somehow always aligned with oil and gas technology, from my time at Sun Microsystems to Microsoft to AWS and now Buurst. I can say that 2020 has been one of the most painful years. It started with a price war between Saudi Arabia and Russia, followed by a global slowdown from Covid-19 and the falling cost of competing energy sources. Ouch! So how do we start the recovery?

Here are three important technology trends to help Oil and Gas bounce back.

Trend One: The Cloud

We all know drilling dry holes is no longer acceptable. Leveraging geospatial application technology like Halliburton DecisionSpace 365® (Landmark), Schlumberger Petrel®, or IHS Markit Kingdom® has become table stakes for ensuring that you know not just the best place to drill, but the right place to drill.

Most energy companies have invested tens of millions of dollars in building world-class data centers dedicated to this work. These investments are essential and have clear strategic value. But the costs keep going up, and you need more and more IT and security people to manage your investment (it’s a dangerous world out there). Worst of all, you get to replace this investment every three years if you want to stay competitive. What do you do when you don’t have $20M to retool? Today there is an answer: Move the workloads to the cloud.

Why the cloud? You get the fastest and most up-to-date processing power without the need to buy the infrastructure. Moving to the cloud lets you shift your investment from a capital expense to an operating expense that you pay for by the hour, all backed and secured by companies like Microsoft (Azure) and Amazon (AWS). The move to the cloud is happening today, and it’s happening fast. Our Energy business is growing more quickly this year than it has over the past seven years. There is a tipping point for the cloud, and it is 2020.
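To put the capital-versus-operating trade-off in numbers, here is a rough sketch using only the figures mentioned above: a $20M retool every three years. The function name is made up for illustration, and the calculation deliberately ignores staffing, power, facilities, and data transfer costs, so treat the result as a ballpark and not a budget.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def breakeven_hourly_rate(capex_dollars: float, refresh_years: float) -> float:
    """Hourly spend that equals a hardware refresh spread evenly over its life.

    Assumption: the refresh cost is the only cost considered. Real comparisons
    would add people, power, facilities, and cloud data transfer charges.
    """
    return capex_dollars / (refresh_years * HOURS_PER_YEAR)


# Figures from the article: $20M to retool, replaced every three years.
rate = breakeven_hourly_rate(20_000_000, 3)
print(f"Break-even cloud spend: ${rate:,.2f} per hour")
```

Under those assumptions, the refresh alone works out to roughly $760 for every hour of the three years, whether the hardware is busy or idle. Pay-by-the-hour cloud capacity only has to beat that number for the hours you actually use it.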

Trend Two: Lift and Shift

Saving investment dollars, closing data centers, and simplifying your IT footprint are crucial goals of moving to the cloud. Companies often take one of two approaches. The first approach is to move specific workloads that are data- and processing-intensive. Geospatial applications are great examples. The second approach is to focus on closing your data centers. Many companies have thousands of applications in their data centers, and the prospect of moving these can be daunting. Migrating an application or an entire data center is commonly referred to as Lift & Shift. The good news is that 80% of these apps will move with few or no issues. So now, what do you do with the 20% of the applications that are hard to move? If the goal is to close the data center, you can’t finish till all the applications are migrated. If the data center is still open, you can’t achieve the cost savings, and your IT infrastructure will be more complicated. That is why getting the hard-to-move apps moved is essential.

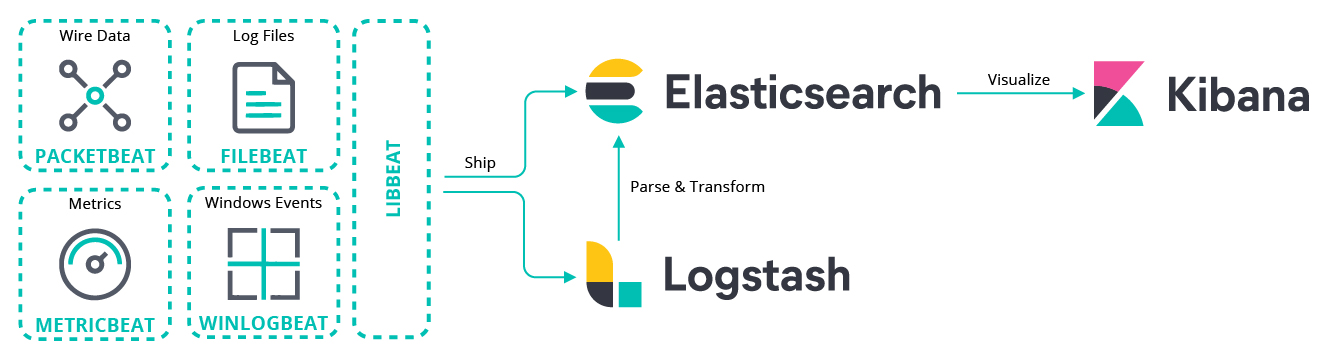

Many companies go down the path of rewriting these hard-to-move applications, but it’s unnecessary. Mostly these apps don’t work in the cloud because they leverage protocols that are not supported in the cloud or because they are latency-sensitive. The most common unsupported protocol is iSCSI. There are solutions here, and it’s essential to leverage them for these hard-to-move apps. Buurst SoftNAS is a great example.
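The two blockers named above, unsupported protocols and latency sensitivity, are easy to screen for once you have an application inventory. The sketch below is one hypothetical way to do that triage. The `App` record, the sample application names, and their protocols are all invented for illustration, and the unsupported set contains only iSCSI because that is the only protocol named here.

```python
from dataclasses import dataclass, field

# Assumption: only iSCSI is named in the text; extend this set for your cloud.
UNSUPPORTED_PROTOCOLS = {"iscsi"}


@dataclass
class App:
    """Hypothetical inventory record for one data center application."""
    name: str
    protocols: set = field(default_factory=set)
    latency_sensitive: bool = False


def is_hard_to_move(app: App) -> bool:
    """Flag apps that use an unsupported protocol or are latency-sensitive."""
    uses_unsupported = any(p.lower() in UNSUPPORTED_PROTOCOLS for p in app.protocols)
    return uses_unsupported or app.latency_sensitive


def triage(apps):
    """Split an inventory into (easy, hard) lists of app names."""
    easy = [a.name for a in apps if not is_hard_to_move(a)]
    hard = [a.name for a in apps if is_hard_to_move(a)]
    return easy, hard


# Made-up inventory; anything not flagged is treated as movable in this sketch.
inventory = [
    App("intranet-wiki", {"NFS"}),
    App("legacy-sql-reporting", {"iSCSI"}),
    App("seismic-viewer", {"SMB"}, latency_sensitive=True),
]
easy, hard = triage(inventory)
print("Lift and shift as-is:", easy)
print("Needs a storage or latency plan:", hard)
```

A list like the second one is where a cloud storage layer that speaks the legacy protocol earns its keep, since it lets those apps move without a rewrite.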

Legacy SQL Server workloads often fall into this category, and the countless instances across an enterprise could take all your DevOps resources years to rework. Don’t let your DevOps resources work on legacy workloads. These expensive and vital resources should be building the applications of the future. Leverage cloud storage solutions that support the protocols you’re using and move forward.

Trend Three: Cross-Platform Business Partnerships

This trend is a little controversial but essential. If you ask any cloud vendor about a multi-cloud strategy, they will always tell you to pick just one, and to make sure it’s them. There are some excellent reasons to choose one cloud, and it’s worth taking the time to look before you make the decision. The trend we’re seeing is to pick solutions that work in different clouds, so moving between them will not require reengineering your infrastructure.

A VMware hypervisor is a great example. You probably use it in your data center today, so moving it is a straightforward effort. Storage is often overlooked and becomes the “lock-in” element of choice for cloud vendors. Knowing what makes a cloud sticky is essential. If you know going in, you can avoid making costly mistakes. Fortunately, there are many great partners, such as SoftwareONE, Kaskade.Cloud, CANCOM, VSTECS, and LANStatus, to name a few, with cloud architects who can help you manage this part of your transition.

BP recently said that oil demand may never rebound to pre-pandemic highs as the world shifts to renewables. I don’t know if that’s true, but for now, there is a clear need to rethink spending and redirect resources to take advantage of lessons learned in other industries. No one ever wants to be first to take the plunge. Fortunately, companies like Halliburton, Schlumberger, Petronas, IHS Markit, and ExxonMobil have all moved and are leveraging these strategies. Come on in, the water’s fine.